This is an edited article; I originally wrote it as part of an MSc in International Public Policy.

Title: Policy making is all too often more about policy-based evidence making rather than evidence-based policy making

Image courtesy of Steve Buck – https://bit.ly/2HgKsYp

————-

An Introduction

Many imperatives can lead to the use of policy-based evidence (PBE) rather than evidence-based policy making (EBPM), and this article seeks to explore them. Numerous studies have examined whether EBPM exists merely as a figure of speech or as part of a rational process.

Whilst it covers significant ground, this is by no means a fully comprehensive analysis, which would go beyond the scope of this article.

Split into four sections, this article will first examine fact interpretation and the role it plays in the discourse. Second, it will examine policy learning through evaluations, and randomised controlled trials (RCTs) as an instrument for better policy outcomes. Third, it will discuss the way policymakers and decision makers understand problems and establish evidence for solutions under the influence of ideology and ever-present bounded rationality, and will highlight the role ambiguity plays in the EBPM discourse. Finally, it will introduce behavioural public policy, specifically nudges and an innovative policy instrument, payment by results (PbR), and the contribution they make to the discussion.

Many variables can influence a policymaker’s decision making; the main ones will be noted and discussed where possible. However, this article also recognises that there are ever more variables yet to be researched in an increasingly complex world. The principal case study used throughout this article is that of RCTs in the English school system. The article will conclude by arguing that policy-based evidence making is all too prevalent.

Defining and exploring ‘Evidence-based policy making’

Evidence-based policy making is a deeply ambiguous and contested metaphor, differing significantly from the term policy-based evidence. For proponents of the former, the metaphor “evidence-based” suggests that scientific evidence comes first and acts as the primary reference point for a decision (Cairney, 2017). Whilst the underlying definition may be reasonably clear for proponents of EBPM, for its many detractors this provides a lens of “rational” policy making that does not exist in the real world.

For the critics of EBPM, policy-based evidence provides a descriptive and prescriptive lens that can describe a range of mistakes by policy makers – including ignoring evidence, using the wrong kinds, “cherry picking” evidence to suit their ideologies and agendas, and/or producing a disproportionate response to evidence – without describing a realistic standard to which to hold them (Cairney, 2017). For instance, is policy more evidence-informed than evidence-based? The definitional problem for EBPM is never really solved: ambiguity always remains over what it is, and each actor defines it differently.

Fact Interpretation: Separating fact from opinion

Fact or opinion, clear or muddy?

Muddying the waters for any policy that aims to be more evidence-based, facts can be misinterpreted, either intentionally or unintentionally.

As Kisby puts it, facts are framed as evidence to support or contradict a particular theoretical claim or hypothesis (Kisby, 2011). Evidence itself can be politicised. Trends do not all go in one direction, with governments willing to politicise evidence in line with their ideological and political agendas (Kisby, 2011).

Meaning is inscribed through, but also derived from, the use of evidence, which may be open to a wide range of plausible interpretations (Kisby, 2011). Assumptions based on models and politicisation determine what constitutes evidence, and with politicisation and assumptions comes hierarchy. The selection of one preferred piece of evidence over another significantly alters the course of a policy at various stages in the policy making process.

Credits to Bilal et al., https://bit.ly/3kbgh33

The role of New Public Management

The rise of New Public Management ushered in policy learning through evaluations, which became commonplace throughout the 1980s and 1990s, primarily focusing on the realist method-based evaluation approach. Government ministers have increasingly made use of the phrase ‘what matters is what works’, with evidence of what works provided through substantially increased research and evaluation programmes and the use of pilot projects to test out new approaches (Sanderson, 2002).

Policy learning through evaluations, and randomised controlled trials (RCTs)

Programmes such as the single work-focused gateway, Health Action Zones (HAZs), and Sure Start were all subject to initial piloting (Sanderson, 2002). Sanderson states that evaluations have been set up to answer two key questions: first, ‘does it work’; and second, ‘how can we best make it work?’ (Sanderson, 2002). The constraints on the ability of evaluations to provide a suitable answer to the first question are time and imperfect information. For example, it can take considerable time for pilot projects to become fully established (Sanderson, 2002).

The political constraints: Being politically bound

Political considerations can constrain the length of time for which pilot programmes operate, limiting their effectiveness (Sanderson, 2002). This constraint arises because policymakers may be eager to implement policies. When initiatives arise from political manifesto commitments, policymakers are impatient to receive results that will provide evidential support for decisions to proceed with full implementation (Sanderson, 2002). Such issues can lead to evidence being misinterpreted, or to undue pressure being applied to the government departments producing the evidence.

The importance of neutral (ideologically free), independent institutions comes into its own here, as the opportunity for evidence-based policy can otherwise be degraded. These considerations strengthen the case for increased methodological pluralism in policy analysis.

Understanding problems and establishing evidence

Rationalist approaches suggest that the quality of the evaluation itself should lend itself to evaluation use (Stewart and Jarvie, 2015). However, other more critical approaches stress that policy making is a process based on argumentation, and that evaluation use is best viewed interactively (Stewart and Jarvie, 2015). There is some evidence to support this argument: two-way policy transfer occurs in most policy processes.

Networks are key vehicles for interactive evaluation; while the state is key in transfers, it is by no means the exclusive actor. International organisations, bureaucrats, non-state actors, and in particular knowledge-based actors are all involved in the export of ideas (Stone, 2001). However, as Stone (2001) argues, the inclusion of think tanks, consultants or other expert groups into a global policy network, ‘doesn’t depend so much on its innovative ideas, but rather upon whether or not it shares a common value system with the government or international organisation that desires the policy transfer to occur’. Key constraints beyond a policymaker’s control may come from outside the state; thus the rationalist approach is inadequate and ignores the realities of politics.

Quantitative data has increasingly been used to inform policy decisions, most notably in the UK education system. Its use has increased significantly since the Conservative-Liberal Democrat coalition came to power.

Reducing ambiguity: A role for randomised controlled trials (RCTs)

RCTs are one policy instrument that can be used to reduce ambiguity at a critical phase in the policy process, and they have been used in this way with some success. Two organisations are notable examples. The first is the Education Endowment Foundation (EEF), which has made significant use of RCTs, commissioning 10% of all randomised controlled trials ever carried out in education research (The Economist, 2018). The second is the UK Cabinet Office’s Behavioural Insights Team (BIT). John (2013) highlights that key factors in the BIT’s success are its willingness to work in a non-hierarchical way, its skill at forming alliances, and its ability to form good relationships with expert audiences. The two organisations present themselves as evidence-based. Their heavy use of RCTs and effective networking can be seen as an example of their organisational confidence in obtaining knowledge that can alter behaviour; certainly, knowing that the evidence produced will be at the top of any hierarchy is a motivating factor.

Results from RCTs are often referred to as scientific, non-biased and rational by proponents of the method (Wesselink et al., 2014). Although the BIT works in a flat, non-hierarchical way, the evidence it produced was naturally at the top of the government’s hierarchy up to 2015. Such a hierarchy is created so that evidence-producing institutions can solve what many studies predominantly focus on: the problem of uncertainty and incomplete information (Cairney et al., 2016).

However, this hierarchy is partly artificial, as the EEF and BIT have had significant government involvement. This raises the question of how much they benefit over other forms of evidence, which may be more reliable but sit further down the hierarchy, thus widening the divide between evidence and policy.

Issues around the implementation of RCTs exist, such as randomisation and poor study design. Terry Wrigley, a visiting professor at Northumbria University, and Professor Biesta of Brunel University London accuse the EEF’s toolkit of “bundling together” very different studies, carried out in a range of differing contexts, to come up with the average number of months of progress gained, when some of the studies contradict the headline statistic (Smith, 2017). In addition to these issues of randomisation, ideological agendas also feature.

Libertarian paternalism holds that governments ‘nudge’ citizens into making more rational choices (Thaler and Sunstein, 2008, p.47). Nudges and behavioural policies are known as “soft paternalism”, with the aim of not upholding the “nanny state”, which appeals to both libertarians and conservatives (The Economist, 2014). The BIT was established under the Conservative-Liberal Democrat coalition in June 2010 as a unit within the Cabinet Office (John, 2013). The institutional norms created by the establishment of the BIT, and the large amount of work it does with central government departments, mean it can hardly be termed ideologically neutral (John, 2013). Furthermore, policymakers may be resistant to innovation and new policies because they may be associated with younger post holders whose careers might benefit (John, 2013).

Nevertheless, it would appear that the UK is pushing and adopting evidence from RCTs and behavioural studies more than other countries. Van Deun et al. (2018) confirm this, pointing to the comparatively wider attention given to the subject within academia and government, and to the establishment of ‘nudge units’ in both countries. They also state that worldwide 40% of the articles are linked to health policies and almost 20% relate to environmental policies, with around 2% on education (Van Deun et al., 2018). For instance, the EEF claims it has commissioned 10% of all randomised controlled trials ever carried out in education research, with a third of English state schools involved in at least one of its trials (The Economist, 2018). The UK is taking the lead in certain sectors of behavioural policy research.

Interestingly, Van Deun et al. point to the small size of field experiments, which seems to suggest that researchers have only limited involvement in applying nudges in public policies and in testing the effects of interventions (Van Deun et al., 2018). Naturally, this raises the question of who is applying nudges in public policies and who is testing the effects of interventions. However, it is noted that the application of nudges in public policy is limited, and nudge policies still constitute a new field of study. The UK is also pioneering research in education; however, it lacks any comparison. Lack of comparison or choice in this sector is a significant argument against EBPM.

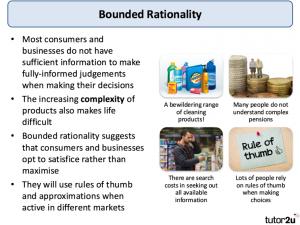

An explanation of bounded rationality, courtesy of tutor2.net

Bounded rationality: Are policymakers bound?

The way policymakers understand problems and establish evidence for solutions is a key factor in determining whether policy is evidence-informed or driven by policy itself. In formulating policy there are significant limits to a policymaker’s attention, ‘solutions’ often exist before ‘problems’, and human knowledge is necessarily incomplete and fragmented (Parsons, 1995, p.278). Policymakers are often satisficing rather than maximising: they are fallible and operate in an open setting, with ambiguous scope, imperfect information, and limited time (Simon, 1965, p.45). A policymaker often operates in a world of bounded rationality, bounded in part by social differentiation. Problem interpretations are differentiated; for example, there are situations where clients can perceive a problem differently to staff, and the decision maker can appreciate aspects of a situation that either clients or staff do (Forester, 1984).

Information is never perfect…

In this situation, information is not only imperfect, but it is of highly varying quality, in equally varying locations, with less than simple and direct accessibility (Forester, 1984). As time is a socially variable resource, different actors have different amounts of it to devote to the decisions or problems at hand (Forester, 1984). Satisficing is no longer adequate in this situation; it needs to be supplemented by the use of social intelligence networks, where decision making takes place and evidence is produced in a socially differentiated environment. Satisficing, which is selecting suboptimal evidence to form a policy over the optimal solution, poses a challenge to EBPM. This may have occurred in the trial of signature placement by a local authority that did not confirm Dan Ariely’s argument that signatures at the top of documents increased compliance (John, 2013).

The vast amount of data required to nudge an individual in a chosen direction should mean that evidence-informed or evidence-based policy is a given, as what is learned about individuals is fed into other policy amendments. However, as shown above, policy learning is complicated, with bounded rationality being just one of many complications. Thaler and Sunstein explain that as alternatives increase and/or vary on more dimensions, policymakers are likely to adopt simplifying strategies (Thaler and Sunstein, 2008, p.232).

Increased data means more choices. Thus, choice architecture matters to policymakers when formulating a policy, as good choice architecture will provide structure, and structure affects policy outcomes (Thaler and Sunstein, 2008, p.92). Whilst it may be true that evidence-based or evidence-informed policy will increase with more choice, we know that in the real world a policymaker is bounded. So the question becomes: to what extent is the policy evidence-based? And as more evidence is presented to the policymaker, might this in fact degrade real evidence-based policy? These are the pressing questions.

An introduction to behavioural public policy: Payment by results (PbR)

PbR is another innovative policy instrument, under which payments are dependent on independent verification of results. Payment by results allows the government to pay a provider of services on the basis of the outcomes their service achieves rather than the inputs or outputs the provider delivers (Puddicombe et al., 2012). The definition of what a result consists of is a key issue, as it defines what type of evidence constitutes an acceptable result.

In the follow-up to their seminal paper ‘Payment by results and social impact bonds in the criminal justice sector: New challenges for the concept of evidence-based policy?’ (Fox and Albertson, 2011), Fox and Albertson state that the Ministry of Justice notes there are several measures of re-offending which might be taken as indicators of the success of a PbR scheme (Puddicombe et al., 2012). They go on to claim that, to date, a simple, binary measure of proven re-offending seems to be the Ministry’s preferred option (Puddicombe et al., 2012). There is no reference as to how the Ministry of Justice chose this measure over the others available to it. Nevertheless, as discussed previously, an adequate choice architecture provides efficient structure.

It can also be argued that such a definition ignores the complexity of an individual’s journey to desistance, which might involve initially moving to less serious or less frequent offending (Puddicombe et al., 2012). Definitions that serve as headline targets can be counterproductive, for example in education, the health sector, and other sectors where success is not easily measured (Puddicombe et al., 2012). These drawbacks suggest that simply shifting emphasis from inputs to outcomes is not the solution to this problem. In addition, choice and hierarchy issues are still present and still a factor for a decision maker in pursuit of a more evidence-based policy.

Considerable research has been undertaken on efficiency and savings. Albertson and Fox point out that the formal evidence base and the personal experience of practitioners suggest modest reductions in re-offending are more likely (Puddicombe et al., 2012). Savings may be too small to identify when such an instrument is implemented. Just as the economics may be called into question, so too may the social effects and the unintended consequences. Corry points to the strengths of PbR, namely that a provider of services will go the extra mile to try to hit outcome targets (Puddicombe et al., 2012). However, this can also lead to undesirable and/or unexpected behaviours (Puddicombe et al., 2012). One such behaviour is the temptation to ‘park and cream’: providers may work hardest with those that they think they can get over the line, and so achieve outcome targets and associated payments (Puddicombe et al., 2012). This behaviour can be desirable and comes naturally, but it can mean, for example, that those offenders who need help are ignored. The real setback is that prime contractors (often for-profit organisations) will pass the toughest cases on to charity sub-contractors (Puddicombe et al., 2012).

The temptation to alter figures is also present. If top-down pressure is applied in an organisation to hit specified targets, then actors in the process may be tempted to make sure data (evidence) says what those at the top of the hierarchy want to hear. The answer to such a problem is openly accessible data. Corry states that the more publicly available the data, the less room there is for this to happen, as people can monitor and pick up on fraud more easily (Puddicombe et al., 2012). Overall, the focus on improving efficiency and cost cutting could push governments to use PbR, especially a government driven more by ideology.

If a government is driven significantly by ideology and has tough manifesto commitments it intends to follow through on, then it is doubtful that this would lead to evidence-based or evidence-informed policy outcomes. A present example is the UK’s withdrawal from the EU, where the UK government is pursuing a policy that most sources state will leave the UK worse off economically (Crerar, 2018). However, the present government is following through on the June 2016 referendum, with government ideology and interpretation playing a significant role in what sort of result the UK is likely to have. It can certainly be said that examples of policy-based evidence making exist at the local, national, and international levels.

What this discussion presents

This article has shown that evidence-based policy making is an extremely contested arena, with no consensus on any definition. This leaves a tricky starting point from which to discuss what evidence-based policy making really is. Evidence-based policy making as a metaphor has many detractors, whose criticisms range from the argument that it provides a lens of “rational” policy making that does not exist in the real world to the question of whether policy is more evidence-informed than evidence-based.

That facts can be framed, or unintentionally misinterpreted, further adds to the contestation. Governments politicise, and individuals and groups bring agendas and ideologies to the evidence “soup”. Assumptions based on models and politicisation determine what constitutes evidence, and with such politicisation and assumption comes hierarchy.

This article has shown that policy learning through evaluations is increasingly complex. With the focus on the realist method-based evaluation approach, and the phrase ‘what matters is what works’, the use of RCTs as a means of generating evidence has become more common. However, this article has highlighted that political considerations can considerably constrain the length of time for which pilot programmes operate. The importance of independent institutions should not be underestimated in such instances, nor should their effect on the evidence produced.

Most nudging and payment by results implementations, with their increased use of RCTs, are heavily dependent on structured evidence. The increased use of RCTs can in some instances allow for greater evidence-informed policy and delivery; however, this article has argued that hierarchies can influence a decision maker negatively. RCT evidence is generally at the top of any government hierarchy and is often selected for use first because it is seen as reliable, without taking into account how the evidence was produced or whether the organisation benefited from significant government involvement. RCTs are also open to poor design and issues with randomisation: how “random” are they, for instance?

Nudging and PbR present opportunities for more evidence-informed policy, as the push for evidence to hit specified targets is necessary. However, ideology and interpretation can influence PbR implementation and outcomes. This article has also shown that the push for comprehensive rationality is not achievable, and that a decision maker is always bounded in the real world.

References

Cairney, P., Oliver, K. and Wellstead, A. (2016) ‘To Bridge the Divide between Evidence and Policy: Reduce Ambiguity as Much as Uncertainty’, Public Administration Review, vol. 76, no. 3, pp. 399–402 [Online]. DOI: 10.1111/puar.12555.

Crerar, P. (2018) ‘Each Brexit scenario will leave Britain worse off, study finds’, The Guardian [Online]. Available at https://www.theguardian.com/politics/2018/apr/18/each-brexit-scenario-will-leave-britain-worse-off-study-finds?CMP=share_btn_link (Accessed 23 April 2018).

Fox, C. and Albertson, K. (2011) ‘Payment by results and social impact bonds in the criminal justice sector: New challenges for the concept of evidence-based policy?’, Criminology & Criminal Justice, vol. 11, no. 5, pp. 395–413 [Online]. DOI: 10.1177/1748895811415580.

John, P. (2013) ‘Policy entrepreneurship in UK central government: The behavioural insights team and the use of randomized controlled trials’, Public Policy and Administration [Online]. DOI: 10.1177/0952076713509297 (Accessed 17 April 2018).

Kisby, B. (2011) ‘Interpreting Facts, Verifying Interpretations: Public Policy, Truth and Evidence’, Public Policy and Administration [Online]. Available at http://journals.sagepub.com/doi/abs/10.1177/0952076710375784 (Accessed 11 April 2018).

Parsons, W. D. (1995) Public policy, Brookfield, Vt, Edward Elgar Pub.

Puddicombe, B., Corry, D., Fox, C. and Albertson, K. (2012) ‘Payment by results: Bill Puddicombe, Dan Corry, Chris Fox and Kevin Albertson debate the merits and disadvantages of payment by results’, Criminal Justice Matters, vol. 89, no. 1, pp. 46–48 [Online]. DOI: 10.1080/09627251.2012.721982.

Sanderson, I. (2002) ‘Evaluation, Policy Learning and Evidence-Based Policy Making’, Public Administration, vol. 80, no. 1, pp. 1–22 [Online]. DOI: 10.1111/1467-9299.00292.

Smith, E. (2017) ‘Exclusive: EEF toolkit “more akin to pig farming than science”’, Tes [Online]. Available at https://www.tes.com/news/school-news/breaking-news/exclusive-eef-toolkit-more-akin-pig-farming-science (Accessed 17 April 2018).

Stewart, J. and Jarvie, W. (2015) ‘Haven’t We Been This Way Before? Evaluation and the Impediments to Policy Learning’, Australian Journal of Public Administration, vol. 74, no. 2, pp. 114–127 [Online]. DOI: 10.1111/1467-8500.12140.

Thaler, R. H. and Sunstein, C. R. (2008) Nudge: Improving Decisions about Health, Wealth, and Happiness, New Haven, Yale University Press [Online]. Available at http://ebookcentral.proquest.com/lib/gmul-ebooks/detail.action?docID=4654043 (Accessed 20 April 2018).

The Economist (2014) Nudge unit leaves kludge unit – The market for paternalism [Online]. Available at https://www.economist.com/blogs/freeexchange/2014/02/market-paternalism (Accessed 17 April 2018).

The Economist (2018) England has become one of the world’s biggest education laboratories – The big classroom experiment [Online]. Available at https://www.economist.com/news/britain/21739671-third-its-schools-have-taken-part-randomised-controlled-trials-struggle-getting (Accessed 20 April 2018).

Van Deun, H., van Acker, W., Fobé, E. and Brans, M. (2018) ‘Nudging in Public Policy and Public Administration: A Scoping Review of the Literature’, The Political Studies Association (PSA) [Online]. Available at https://www.psa.ac.uk/conference/psa-annual-international-conference-2018/paper/nudging-public-policy-and-public-0 (Accessed 21 April 2018).

Wesselink, A., Colebatch, H. and Pearce, W. (2014) ‘Evidence and policy: discourses, meanings and practices’, Policy Sciences, vol. 47, no. 4, pp. 339–344 [Online]. DOI: 10.1007/s11077-014-9209-2.